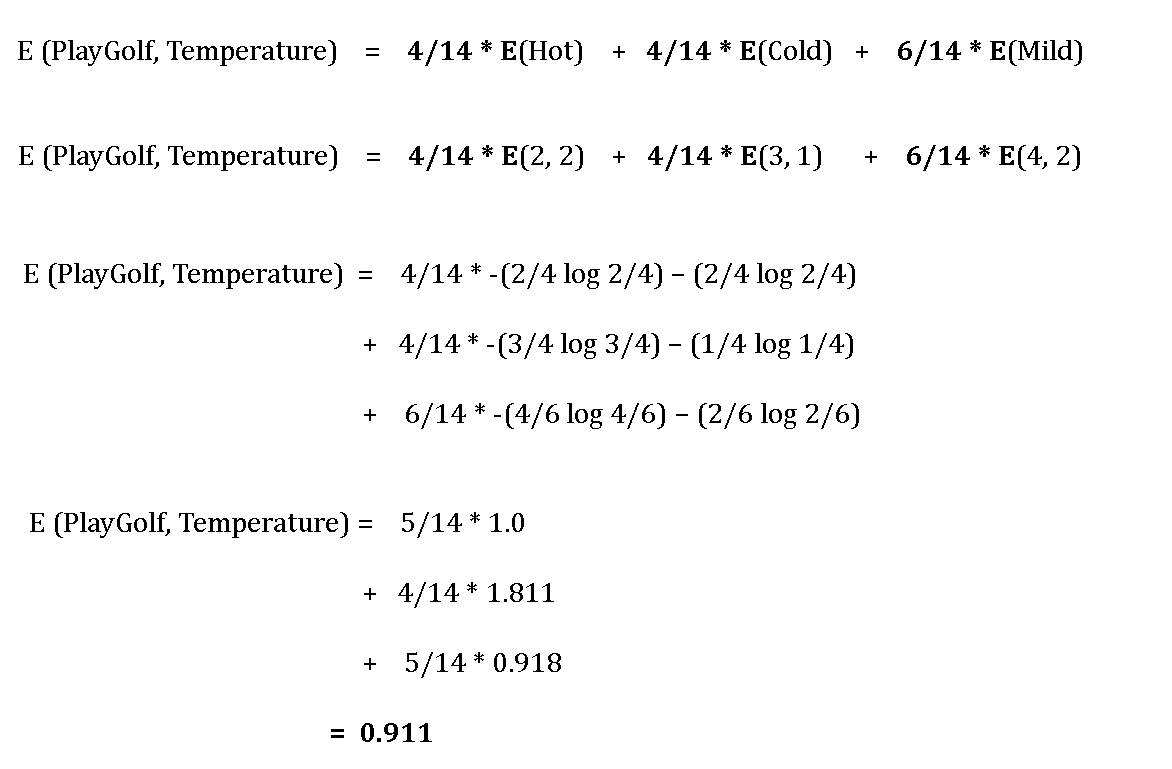

Information Gain is symmetric such that switching of the split variable and target variable, the same amount of information gain is obtained. Generally, it is not preferred as it involves ‘log’ function that results in the computational complexity. Given a probability distribution such thatĪnd where (p i) is the probability of a data point in the subset of □□ of a dataset □, Information Gain = Entropy before splitting - Entropy after splitting

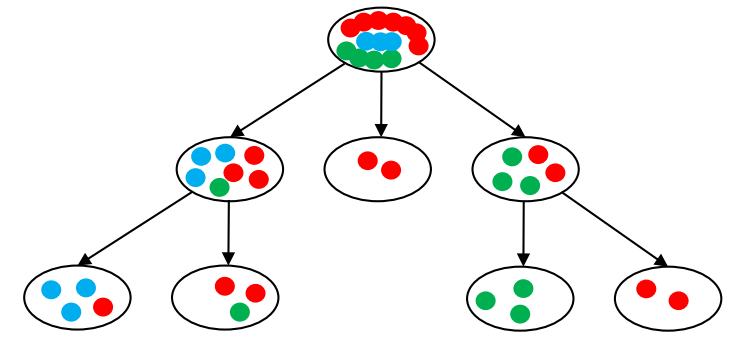

Information gain computes the difference between entropy before and after split and specifies the impurity in class elements. It follows the concept of entropy while aiming at decreasing the level of entropy, beginning from the root node to the leaf nodes. Information gain is used for determining the best features/attributes that render maximum information about a class. However, “Information gain is based on the information theory”. The concept of entropy plays an important role in measuring the information gain. In more simple terms, If a dataset contains homogeneous subsets of observations, then no impurity or randomness is there in the dataset, and if all the observations belong to one class, the entropy of that dataset becomes zero. It is computed between 0 and 1, however, heavily relying on the number of groups or classes present in the data set it can be more than 1 while depicting the same significance i.e. Here, if all elements belong to a single class, then it is termed as “Pure”, and if not then the distribution is named as “Impurity”. It characterizes the impurity of an arbitrary class of examples.Įntropy is the measurement of impurities or randomness in the data points. In the blog discussion, we will discuss the concept of entropy, information gain, gini ratio and gini index.Įntropy is the degree of uncertainty, impurity or disorder of a random variable, or a measure of purity. Some of them are gini index and information gain. In order to check “the goodness of splitting criterion” or for evaluating how well the splitting is, various splitting indices were proposed. However, pure homogeneous subsets is not possible to achieve, so while building a decision tree, each node focuses on identifying the an attribute and a split condition on that attribute which miminzing the class labels mixing, consequently giving relatively pure subsets. 
This structure holds and displays the knowledge in such a way that it can easily be understood, even by non-experts.” ( From) The 'knowledge' learned by a decision tree through training is directly formulated into a hierarchical structure.

This process of classification begins with the root node of the decision tree and expands by applying some splitting conditions at each non-leaf node, it divides datasets into a homogeneous subset. These algorithms are constructed by implementing the particular splitting conditions at each node, breaking down the training data into subsets of output variables of the same class. Decision Trees are supervised machine learning algorithms that are best suited for classification and regression problems.
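The entropy and information gain ideas described above can be sketched in a few lines of Python. This is only a minimal illustration, not a reference implementation: the function names `entropy` and `information_gain` and the toy "yes"/"no" labels are assumptions made for the example, and the entropy after a split is taken as the size-weighted average of the subsets' entropies.

```python
import math
from collections import Counter


def entropy(labels):
    """Base-2 entropy of a sequence of class labels (hypothetical helper)."""
    total = len(labels)
    if total == 0:
        return 0.0
    counts = Counter(labels)
    # -p * log2(p) is written as p * log2(1 / p), which is the same value
    # but avoids returning -0.0 for a pure set.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


def information_gain(parent, subsets):
    """Entropy before splitting minus the weighted entropy after splitting."""
    total = len(parent)
    after = sum(len(s) / total * entropy(s) for s in subsets)
    return entropy(parent) - after


# Toy data (assumed for illustration): 5 "yes" and 5 "no" labels.
parent = ["yes"] * 5 + ["no"] * 5

# A split that separates the classes completely.
perfect_split = [["yes"] * 5, ["no"] * 5]

# A split whose subsets are as mixed as the parent.
useless_split = [["yes"] * 2 + ["no"] * 2, ["yes"] * 3 + ["no"] * 3]

print("entropy of parent:", entropy(parent))
print("gain of perfect split:", information_gain(parent, perfect_split))
print("gain of useless split:", information_gain(parent, useless_split))
print("entropy of four equally likely classes:", entropy(["a", "b", "c", "d"]))
```

A tree builder would typically evaluate `information_gain` for each candidate attribute and split condition at a node and keep the one with the highest value. The last line shows the case where entropy exceeds 1 because more than two classes are present.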
